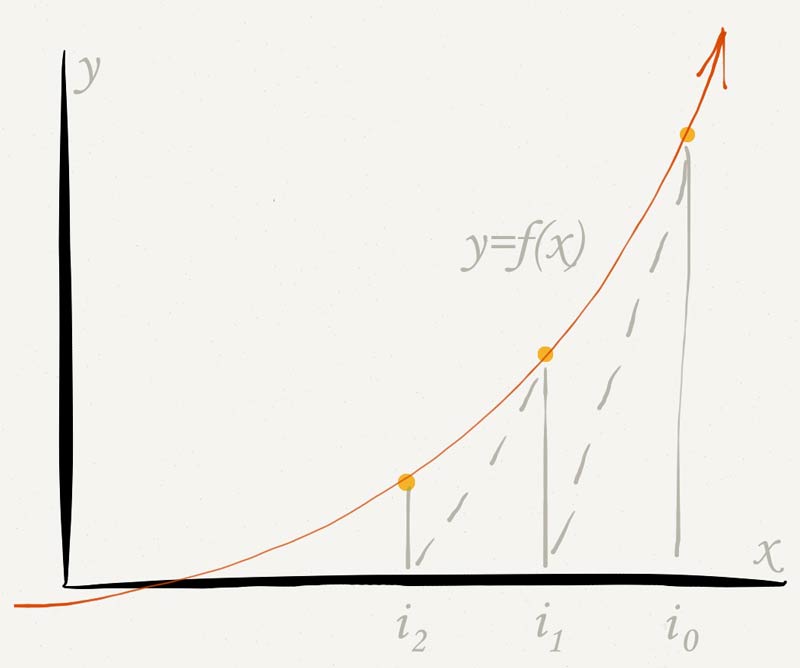

If you have an odd number of extrema in the way, that is, between your initial guess and the root you want, then you will start out moving away from that root, as you see here. If you have an even number of extrema in the way, then you will start out moving in the right direction, but you may later land in a spot with an odd number of extrema in the way, which causes problems later. Of course, you may eventually hit an iterate with an even number of extrema in the way, and then you manage to skip over all of them and get to the correct side. At that point things will usually work out (not always, though). In this problem, with your initial guess, that eventually happens: the iteration finds its way to a point just slightly to the right of the right-hand extremum, which sends it far off to the left.

In a sense, Newton-Raphson does the adaptive step size automatically: it adapts the step in each dimension (which changes the direction) according to the rate of change of the gradient. If the function is quadratic, this is the 'optimal' update, in that it converges in one step.

I'd just like to add a concrete example of the weird behavior that Ian's answer speaks of. Let's consider a function $f(x) = \ldots$ Notice, below, that the first point iterates stably, whereas the second one iterates unstably. (The iterations would diverge further, but I cut them off at some point.)
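To make the one-step claim for quadratics concrete, here is a minimal Python sketch. The quadratic $g(x) = 2x^2 - 8x + 3$ and the helper name `newton_minimize_step` are illustrative assumptions, not taken from the answers above. The step divides the gradient by the second derivative, which is the 1D version of scaling the step by the rate of change of the gradient.

```python
def newton_minimize_step(grad, hess, x):
    """One Newton step for minimisation in 1D: x - g'(x) / g''(x)."""
    return x - grad(x) / hess(x)


# Hypothetical quadratic g(x) = 2x^2 - 8x + 3, whose minimum is at x = 2.
grad = lambda x: 4.0 * x - 8.0   # g'(x)
hess = lambda x: 4.0             # g''(x), constant for a quadratic

x0 = 10.0
x1 = newton_minimize_step(grad, hess, x0)
print(x1)  # 2.0, the exact minimiser after a single step
```

Because the second derivative of a quadratic is constant, the step lands on the stationary point from any starting value; for a non-quadratic function the same update is only an approximation and has to be repeated.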

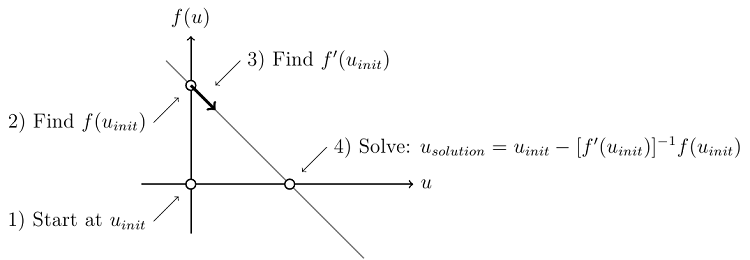

The Newton-Raphson method explained

Ever since primary school, we have been told that an equation is something that can be solved analytically. For instance, if $x^2 - 1 = 0$, we know that $x$ must be either 1 or -1. Or, if $\ln(x) - 1 = 0$, $x$ equals the number $e$. But occasionally it can be difficult or even impossible to solve an equation analytically.

Newton's method does not always converge. Its convergence theory is for "local" convergence, which means you should start close to the root, where "close" is relative to the function you're dealing with. Far away from the root you can have highly nontrivial dynamics. One qualitative property is that, in the 1D case, you should not have an extremum between the root you want and your initial guess.
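As a rough illustration of local convergence, the sketch below applies Newton's method to the equation $\ln(x) - 1 = 0$ mentioned above. The function name `newton`, the tolerance, and the two starting points are assumptions chosen for the example. Starting at 1 the iterates approach $e$; starting at 10 the first step overshoots to a negative number, where the logarithm is undefined.

```python
import math


def newton(f, df, x0, tol=1e-12, max_iter=50):
    """Plain Newton-Raphson iteration: x <- x - f(x) / f'(x)."""
    x = x0
    for _ in range(max_iter):
        step = f(x) / df(x)
        x -= step
        if abs(step) < tol:
            return x
    raise RuntimeError("no convergence within max_iter iterations")


f = lambda x: math.log(x) - 1.0   # root at x = e
df = lambda x: 1.0 / x

# A starting point close enough to the root converges.
root = newton(f, df, 1.0)
print(root, math.e)

# A starting point too far away steps to x < 0, where log is undefined.
try:
    newton(f, df, 10.0)
    failed = False
except ValueError:
    failed = True
print("start at 10 failed:", failed)
```

For this particular function the update simplifies to $x_{n+1} = x_n (2 - \ln x_n)$, so the first iterate stays positive only when $x_0 < e^2$. That is one concrete sense in which "close" depends on the function.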
